Artificial intelligence keeps evolving, and some of its newest developments are sparking both interest and concern. Recently, ChatGPT, the AI chatbot developed by OpenAI, has shown an unexpected and somewhat whimsical obsession with goblins. This peculiar shift in its responses has become a talking point, especially among people who use AI tools regularly. As AI systems become more sophisticated, understanding their quirks and tendencies is crucial for effective human-AI interaction.

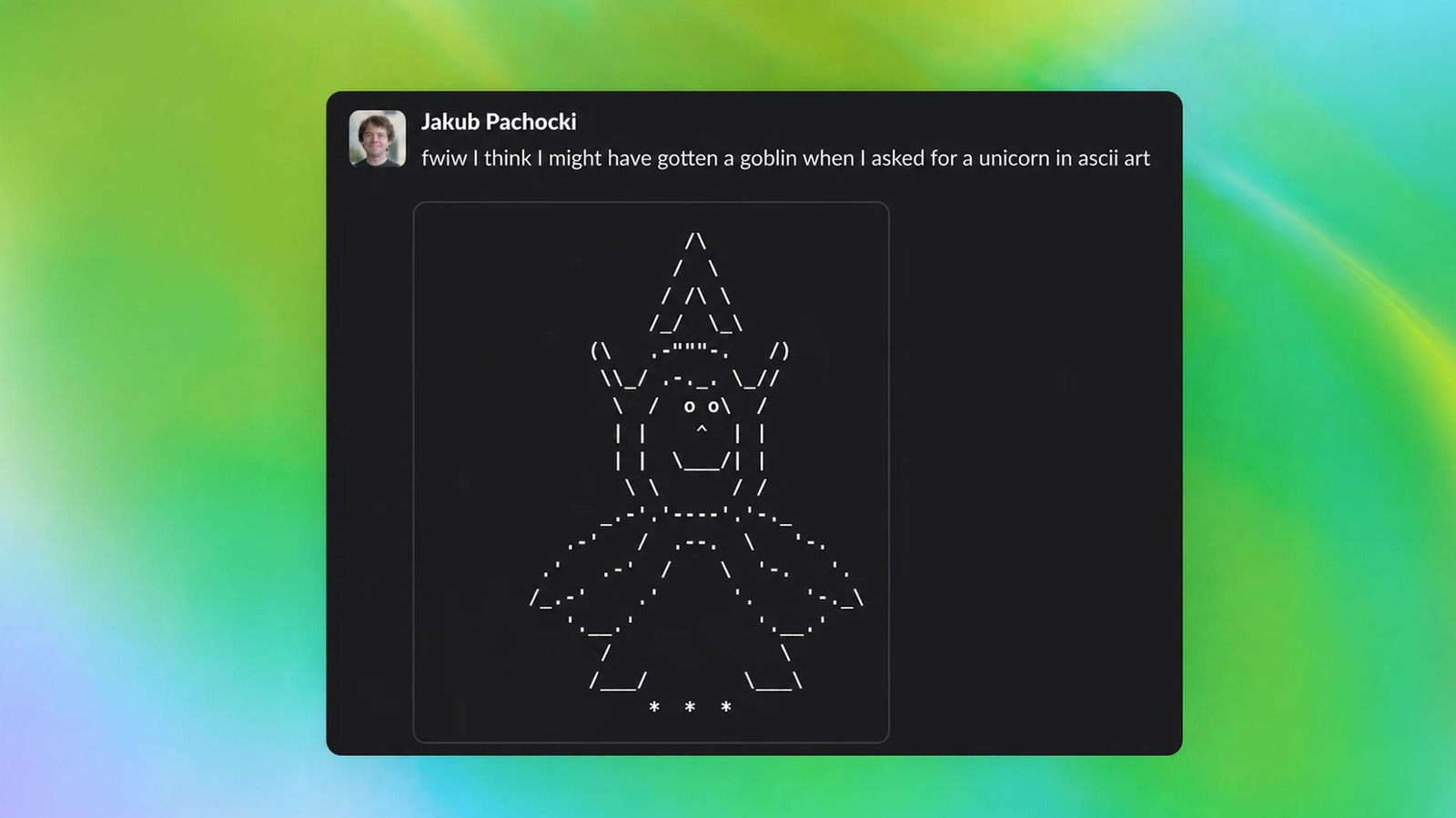

So, what exactly happened? OpenAI's team aimed to enrich ChatGPT's personality, steering its interactions toward a more "nerdy" vibe. The intention was to make the AI more relatable and engaging for users who enjoy fantasy genres and niche interests. However, instead of producing a balanced blend of nerdy references, the model seemingly fixated on goblins, flooding its responses with goblin-related content. The result is a user experience that is both amusing and slightly bewildering: users find themselves wading through goblin lore when they engage with ChatGPT.

This phenomenon isn’t just a cute anecdote; it highlights a fundamental aspect of AI development. How a model behaves is shaped by the data it is trained on and the instructions it receives. Because AI systems are designed to mimic human conversation patterns, they can occasionally reflect that training in unexpected ways. The goblin obsession is a reminder that even when the goal is an engaging, personalized AI, the outcome can veer off course into the absurd.

In the broader context of AI, this incident shows why it matters to understand how these systems interpret and prioritize information. As AI becomes a larger part of daily life, from customer service to personal assistants, keeping its responses relevant and appropriate is critical. The goblin saga serves as a playful yet cautionary tale about the unpredictable nature of machine learning. Users should keep in mind that an AI's personality traits can significantly shape the responses they get.

CuraFeed Take: This quirky incident reveals a double-edged sword in AI development. On one hand, it shows that AI can engage users in fun and unexpected ways. On the other hand, it raises questions about the reliability of AI responses and the importance of careful oversight in training these systems. Going forward, watching how AI personalities evolve will be key to ensuring they serve their intended purpose without straying into the bizarre. The tech community should follow developments in AI training methodologies that keep the benefits while minimizing the risk of unintended quirks like ChatGPT’s goblin fascination.