In the fast-evolving landscape of artificial intelligence, security vulnerabilities can emerge from unexpected quarters and put developers and their projects at risk. A recent discovery involving the PyTorch Lightning library, a widely used tool for accelerating deep learning model training, has alarmed the AI community. The incident is a critical reminder that developers need to treat security as part of their workflows and their choice of libraries.

The malware, named "Shai-Hulud" after the colossal sandworms of Frank Herbert's "Dune," was found embedded within the PyTorch Lightning codebase. The library, developed by Lightning AI with a large community of contributors, organizes PyTorch training code and handles much of the engineering around it, such as device placement and scaling to multiple GPUs. The malware exploits that extensive reach and could affect thousands of developers worldwide, especially those building AI projects on PyTorch.

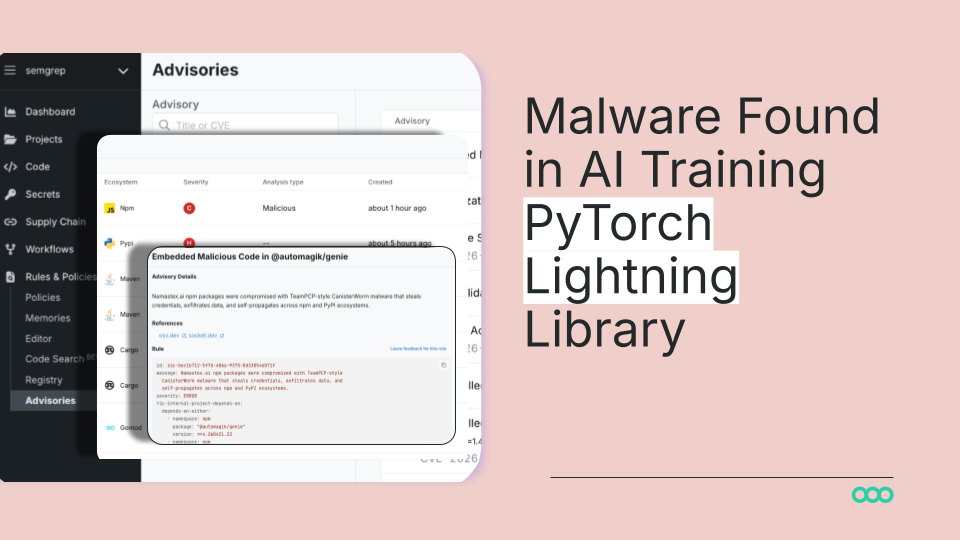

Technical investigations revealed that the malware was hidden within a seemingly innocuous update, a hallmark of a sophisticated supply chain attack. The malicious code appears to have been designed to run on specific triggers, allowing the attacker to exfiltrate sensitive data or perform unauthorized operations on compromised systems. Developers using PyTorch Lightning should recognize that similar threats can surface in other open-source projects and take a more proactive approach to code audits and dependency management.
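A practical first step after an advisory like this is to check whether an affected release is actually installed. The sketch below assumes you already have the list of compromised versions from the advisory you are acting on. The article does not name specific versions, so `FLAGGED_VERSIONS` holds hypothetical placeholders, and `find_flagged_installs` is an illustrative helper rather than part of any library. It relies only on the standard library's `importlib.metadata`.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Callable

# HYPOTHETICAL placeholders: replace with the exact versions named in the advisory.
FLAGGED_VERSIONS: dict[str, set[str]] = {
    "pytorch-lightning": {"0.0.0.example"},
    "lightning": {"0.0.0.example"},
}


def find_flagged_installs(
    flagged: dict[str, set[str]],
    lookup: Callable[[str], str] = version,
) -> list[tuple[str, str]]:
    """Return (package, installed_version) pairs that match a flagged version."""
    hits = []
    for name, bad_versions in flagged.items():
        try:
            installed = lookup(name)  # installed distribution version string
        except PackageNotFoundError:
            continue  # package not installed in this environment
        if installed in bad_versions:
            hits.append((name, installed))
    return hits


if __name__ == "__main__":
    for name, ver in find_flagged_installs(FLAGGED_VERSIONS):
        print(f"WARNING: {name}=={ver} matches a flagged release")
```

The `lookup` parameter exists mainly so the check can be tested without installing anything; in practice you would run the script inside each virtual environment, container image, or CI runner you maintain.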

This incident is not isolated; it reflects a growing trend of malware targeting open-source ecosystems. As AI frameworks like TensorFlow and PyTorch gain traction, they become attractive targets for cybercriminals. The open-source model fosters collaboration and innovation, but it also lets malicious actors reach a large user base by compromising a single widely used library.

In light of this revelation, organizations and individual developers should implement robust security protocols. Regular dependency checks with tools like npm audit or pip-audit, which flag packages that have published security advisories, combined with careful code review, can help mitigate the risks that come with third-party libraries. Developers should also consider automated checks in their test and CI pipelines that can flag suspicious behavior during the development lifecycle.
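Advisory-based scanners only catch problems that have already been reported, so it also helps to make every dependency change explicit. One common measure is to pin exact versions and record their SHA-256 hashes in `requirements.txt`, which pip can enforce with `pip install --require-hashes -r requirements.txt`. A new, unreviewed release then cannot be pulled in silently. The helper below, `unpinned_requirements`, is a hypothetical and deliberately simplified linter for a CI step: it assumes one requirement per logical line, skips option lines such as `--index-url`, and does not handle every form pip's requirement syntax allows.

```python
def unpinned_requirements(text: str) -> list[str]:
    """Return requirement specs that lack an exact '==' pin or a sha256 hash.

    Simplified sketch: handles backslash continuations, comments, and option
    lines, but not the full pip requirements-file grammar.
    """
    # Join backslash-continued lines so each requirement sits on one logical line.
    logical = text.replace("\\\n", " ")
    problems = []
    for raw in logical.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):
            continue  # blank lines and options like -r or --index-url
        spec = line.split()[0]  # e.g. "torch==2.3.1"
        if "==" not in spec or "--hash=sha256:" not in line:
            problems.append(spec)
    return problems


if __name__ == "__main__":
    import sys

    with open(sys.argv[1], encoding="utf-8") as fh:
        issues = unpinned_requirements(fh.read())
    for spec in issues:
        print(f"not pinned with a hash: {spec}")
    sys.exit(1 if issues else 0)
```

Failing the build on any unpinned entry turns every upgrade into a reviewable diff, which complements rather than replaces advisory scanning with pip-audit.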

CuraFeed Take: The discovery of this malware in PyTorch Lightning shows that AI development tooling is now a direct target for attackers. Developers must adapt their practices to include thorough security assessments of open-source dependencies, and organizations should advocate for stronger security standards in the open-source software they rely on. The winners will be teams that treat security as an integral part of their development workflows; those who neglect these threats risk serious setbacks and breaches as the AI ecosystem evolves.