As AI development accelerates, the ethical questions surrounding how these technologies are built and deployed are becoming harder to set aside. Recent events have underscored that urgency: OpenAI's president found himself in a courtroom, reading diary entries that may reveal the organization's internal struggles over its mission. The incident is a stark reminder of the balance between innovation and ethics in AI, a balance that developers and engineers must strike under increasing scrutiny.

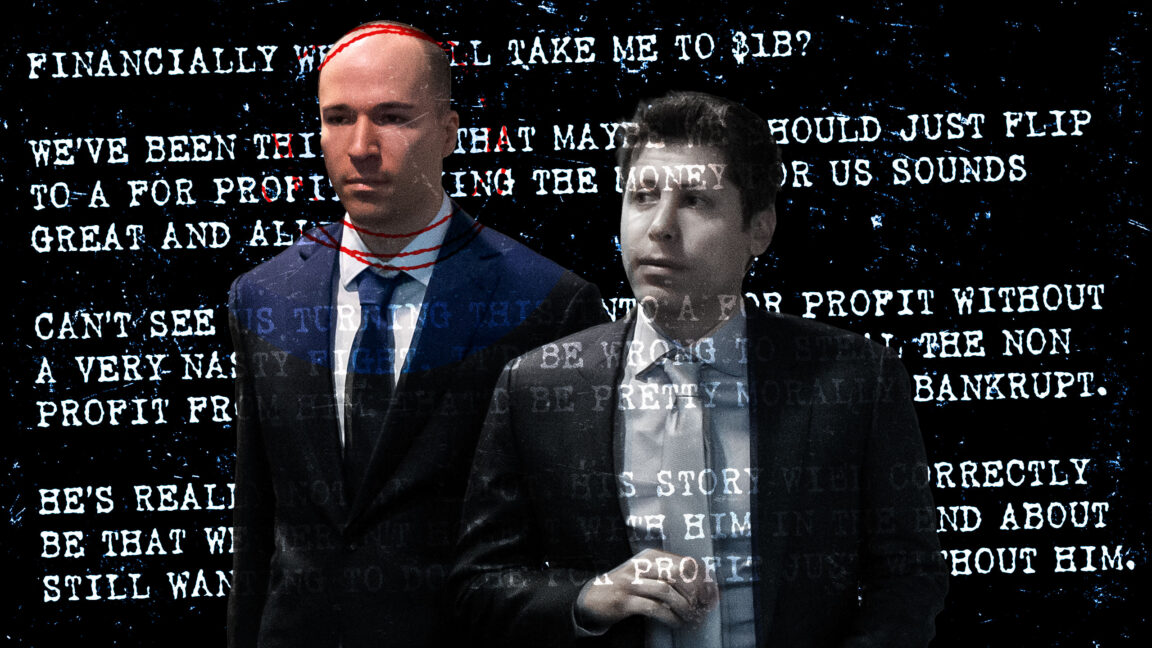

The courtroom drama unfolded as Elon Musk, a co-founder of OpenAI, presented evidence that he claims shows a departure from the organization's original goal of promoting safe and beneficial AI for humanity. Musk's argument centers on the president's personal reflections, which he suggests indicate a conscious shift from those altruistic intentions to profit-driven motives. The episode highlights the tension between commercial interests and ethical imperatives within the AI sector, a tension that matters all the more as companies increasingly use AI for competitive advantage.

During the proceedings, the diary entries were used to support Musk's claim that OpenAI has strayed from its foundational mission. The entries, which detail the president's thoughts and decision-making, offer a rare view of the internal dynamics of a leading AI organization. The implications of this testimony extend beyond OpenAI: they reflect a broader pattern in the AI industry, where corporate governance, ethical standards, and the drive for profitability often clash. Aligning AI development with ethical commitments is hard enough on its own, and the growing demand for transparency and accountability makes it harder.

As developers and engineers, we need to understand these tensions if we are to build AI systems that are responsible as well as innovative. The use of technologies such as machine learning and natural language processing must be balanced with ethical frameworks that prioritize human welfare and societal benefit. The OpenAI case is a reminder of the responsibility we hold as creators of these technologies.

The OpenAI incident is emblematic of a larger struggle in AI, where the promise of transformative technology often collides with the ethical implications of its use. As organizations grapple with these challenges, the call for clear ethical guidelines and frameworks grows more pressing. Ongoing public debate about AI ethics, governance, and the accountability of tech firms is essential to establishing trust in AI systems and keeping them aligned with societal values.

CuraFeed Take: This courtroom spectacle is more than a legal battle; it is a wake-up call for AI developers. The revelations about OpenAI's internal conflicts signal that the industry must treat ethical considerations as seriously as technical innovation. As AI spreads across sectors, businesses that fail to address these ethical dilemmas may face public backlash and regulatory scrutiny. The next steps for OpenAI and similar organizations will likely involve reevaluating their missions and committing to a level of transparency that reassures the public about their intentions. Developers should follow these developments closely, as they will shape how AI evolves and how it affects society.