The transition from academic benchmarking to clinical deployment in medical imaging has exposed a tension in workflow design. Traditional machine learning pipelines optimize for model performance on held-out test sets, but clinical environments demand something different: systems that handle dataset drift and evolving analytical requirements gracefully and, crucially, can reconstruct every decision for regulatory compliance and scientific validation. A framework proposed in recent research attempts to resolve this tension by introducing a semantic agent layer that sits between high-level analytical goals and low-level computational execution.

This shift represents a maturation in how the field conceptualizes medical image processing. Rather than viewing workflows as static computational graphs, the proposed approach treats them as adaptive systems that must respond to dataset-specific characteristics while maintaining an immutable audit trail. The distinction matters because, in clinical settings, reproducibility is not merely a scientific virtue; it is often a regulatory requirement. Yet achieving reproducibility without sacrificing adaptability has remained technically elusive.

The core innovation centers on an artifact contract mechanism that formalizes the interface between workflow components. Each intermediate and final output in the pipeline is treated as a first-class semantic object with explicit metadata describing its provenance, computational dependencies, and validity constraints. Rather than passing raw tensors between processing stages, the framework passes artifact objects that encode both data and context. This semantic layer enables the agent to reason about workflow state at a higher level of abstraction than raw numerical arrays.
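To make the idea of an artifact that carries both data and context more concrete, here is a minimal sketch. The `Artifact` class, its field names, and the step names (`load_dicom`, `resample`) are hypothetical illustrations, not the framework's actual interface; the only assumption taken from the description above is that each output records its provenance, dependencies, and validity constraints.

```python
from dataclasses import dataclass, field
import hashlib
import json


@dataclass(frozen=True)
class Artifact:
    """Hypothetical artifact record: a payload plus the context needed to reason about it."""
    name: str
    payload: bytes                      # serialized data, e.g. an image volume
    produced_by: str                    # provenance: the step that created it
    depends_on: tuple = ()              # digests of upstream artifacts
    constraints: dict = field(default_factory=dict)  # validity constraints

    @property
    def digest(self) -> str:
        """Content hash covering both the metadata and the payload."""
        meta = json.dumps(
            {
                "name": self.name,
                "produced_by": self.produced_by,
                "depends_on": list(self.depends_on),
                "constraints": self.constraints,
            },
            sort_keys=True,  # stable key order so the hash is repeatable
        ).encode()
        return hashlib.sha256(meta + self.payload).hexdigest()


# A raw volume and a derived artifact that points back to it.
raw = Artifact("ct_volume", b"\x00\x01\x02", produced_by="load_dicom",
               constraints={"modality": "CT"})
resampled = Artifact("ct_resampled", b"\x00\x01", produced_by="resample",
                     depends_on=(raw.digest,),
                     constraints={"modality": "CT", "spacing_mm": 1.0})

print(resampled.produced_by, "depends on", resampled.depends_on[0][:12])
```

Because the digest covers metadata as well as data, two artifacts with identical pixels but different provenance hash differently, which is one plausible way to keep an audit trail unambiguous.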

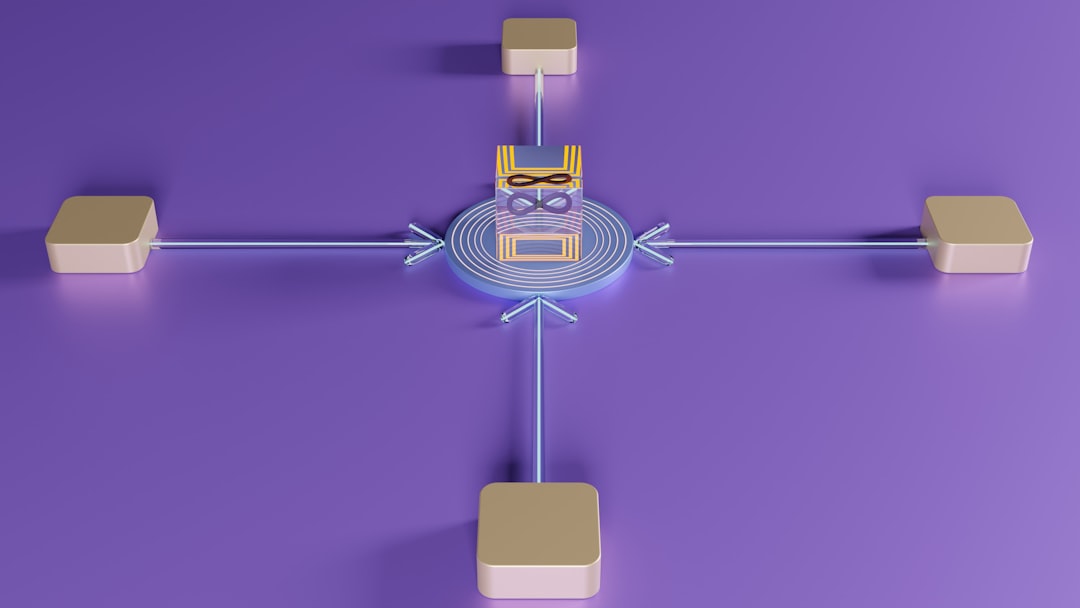

The architecture separates concerns into three distinct layers. The agent layer acts as a goal-conditioned planner that consumes high-level analytical requirements and dataset characteristics, then synthesizes appropriate workflow configurations from a modular rule library. Critically, this layer runs locally (often on CPU or lightweight hardware), avoiding the computational overhead and privacy concerns of centralized orchestration. The executor layer handles deterministic computation graph construction and manages the actual image processing operations. By delegating execution to a separate component, the framework preserves the ability to reconstruct computational graphs exactly as they were originally instantiated, which is a prerequisite for true reproducibility. The artifact contract layer mediates between these components, ensuring that all information necessary for semantic reasoning is available to the agent while maintaining strict separation between decision-making and computation.
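The separation between planning and execution described above can be sketched in a few lines. Everything here is an assumption made for illustration: the rule functions, the `goal` and `traits` dictionaries, the operation names, and the intensity window values are invented, and the resampling step is a placeholder. The point is only that the planner makes every decision up front and the executor applies the resulting steps without making any of its own.

```python
import statistics


def rule_resample(goal, traits):
    # Resample only when the data's spacing differs from what the goal asks for.
    target = goal["target_spacing_mm"]
    if traits["spacing_mm"] != target:
        return [("resample", {"target_mm": target})]
    return []


def rule_normalize(goal, traits):
    # Illustrative choice: fixed window for CT, per-volume z-scoring otherwise.
    if traits["modality"] == "CT":
        return [("clip", {"lo": -1000, "hi": 400})]
    return [("zscore", {})]


RULE_LIBRARY = [rule_resample, rule_normalize]


def plan(goal, traits):
    """Agent layer: turn a goal and dataset traits into an explicit list of steps."""
    steps = []
    for rule in RULE_LIBRARY:
        steps.extend(rule(goal, traits))
    return steps


def resample(values, target_mm):
    return list(values)  # placeholder: real resampling is out of scope here


def clip(values, lo, hi):
    return [min(max(v, lo), hi) for v in values]


def zscore(values):
    mean = statistics.fmean(values)
    spread = statistics.pstdev(values)  # assumes the values are not all equal
    return [(v - mean) / spread for v in values]


OPS = {"resample": resample, "clip": clip, "zscore": zscore}


def execute(steps, values):
    """Executor layer: apply the planned steps in order, with no decisions of its own."""
    for name, params in steps:
        values = OPS[name](values, **params)
    return values


goal = {"target_spacing_mm": 1.0}
ct_plan = plan(goal, {"modality": "CT", "spacing_mm": 0.7})
ct_out = execute(ct_plan, [-2000, 0, 900])
print(ct_plan)  # [('resample', {'target_mm': 1.0}), ('clip', {'lo': -1000, 'hi': 400})]
print(ct_out)   # [-1000, 0, 400]
```

Since the plan is plain data, it can be stored alongside the outputs and replayed later, which is the property the executor layer relies on for reconstructing a run.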

Evaluation on real-world clinical CT and MRI cohorts demonstrates three critical capabilities. First, the framework synthesizes adaptive configurations that respond to dataset-specific conditions, handling variations in image resolution, modality, anatomical coverage, and preprocessing requirements without manual intervention. Second, repeated executions of the same workflow produce bitwise-identical outputs, satisfying stringent reproducibility requirements. Third, artifact-grounded semantic querying enables researchers to interrogate workflow state and understand precisely which configurations were applied to which data subsets. These results suggest that the apparent conflict between adaptability and reproducibility may be resolvable through proper abstraction of workflow semantics.
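As a rough illustration of the second and third capabilities, the sketch below checks bitwise identity by comparing SHA-256 digests of two runs, then queries a small run log to find which subsets used a given configuration. The `run_workflow` stand-in, the subset names, and the log layout are all hypothetical; the evaluation described above does not specify how these checks were implemented.

```python
import hashlib


def run_workflow(config, voxels):
    """Stand-in for a deterministic executor: a pure function of config and input."""
    lo, hi = config["clip"]
    return bytes(min(max(v, lo), hi) for v in voxels)


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


voxels = [0, 50, 255]
config_a = {"clip": (10, 200)}
config_b = {"clip": (0, 255)}

# Bitwise reproducibility: two runs with the same inputs must hash identically.
first = digest(run_workflow(config_a, voxels))
second = digest(run_workflow(config_a, voxels))
print("bitwise identical:", first == second)

# A run log recording which configuration was applied to which data subset.
run_log = [
    {"subset": "site_a_ct", "config": config_a, "output_sha256": first},
    {"subset": "site_b_ct", "config": config_b,
     "output_sha256": digest(run_workflow(config_b, voxels))},
]


def subsets_using(log, key, value):
    """Answer 'which subsets were processed with this setting?' from the log alone."""
    return [entry["subset"] for entry in log if entry["config"].get(key) == value]


print(subsets_using(run_log, "clip", (10, 200)))  # ['site_a_ct']
```

Comparing digests rather than raw arrays keeps the check cheap and lets the hash itself serve as the recorded evidence of what a run produced.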

The broader significance lies in how this framework reframes the medical imaging pipeline design problem. Rather than choosing between rigid reproducibility and flexible adaptation, the agent-executor separation enables both through semantic mediation. This architectural pattern echoes recent trends in AI systems design—separating reasoning (agent) from execution (deterministic compute)—but applies it specifically to the domain constraints of clinical imaging, where privacy, regulatory compliance, and heterogeneous data are non-negotiable requirements.

CuraFeed Take: This work addresses a genuinely important problem that has received insufficient attention in the ML community. Most published medical imaging research operates in a fantasy world where datasets are homogeneous, requirements are static, and reproducibility is optional. Real clinical deployment shatters all three assumptions. The artifact contract mechanism is elegant because it doesn't require reimagining the underlying computational model—it simply adds a semantic layer that the agent can reason about without compromising deterministic execution. The local-agent design is also strategically smart for healthcare, where centralized cloud orchestration often violates institutional data governance policies. Watch for adoption in healthcare systems that have struggled with the reproducibility-adaptability tradeoff; this framework may finally provide a practical solution. The key limitation to monitor: whether the modular rule library can scale to the full complexity of real clinical workflows, or whether domain-specific customization will ultimately require case-by-case engineering anyway.