The apparent mathematical sophistication of modern language models masks a fundamental uncertainty: are these systems genuinely reasoning about mathematical structures, or have they simply become expert pattern-matchers over billions of tokens containing mathematical notation? This question has grown increasingly urgent as LLMs achieve impressive performance on standardized mathematical benchmarks, yet struggle with novel mathematical constructs and out-of-distribution problems. A new framework proposed in recent research—Math Takes Two—directly confronts this ambiguity by abandoning the comfortable ground of established mathematical conventions entirely.

The core idea is simple: if mathematical reasoning is a genuine cognitive capability, it should be reconstructible from first principles under communication pressure. Rather than evaluating models on their ability to solve equations written in conventional notation, the researchers designed a scenario in which two agents must independently discover that a numerical system solves their coordination problem. This approach mirrors hypotheses from cognitive science suggesting that human mathematical cognition co-evolved with communication needs: our ancestors developed counting and arithmetic partly because shared numerical language enabled trade, resource allocation, and collective problem-solving.

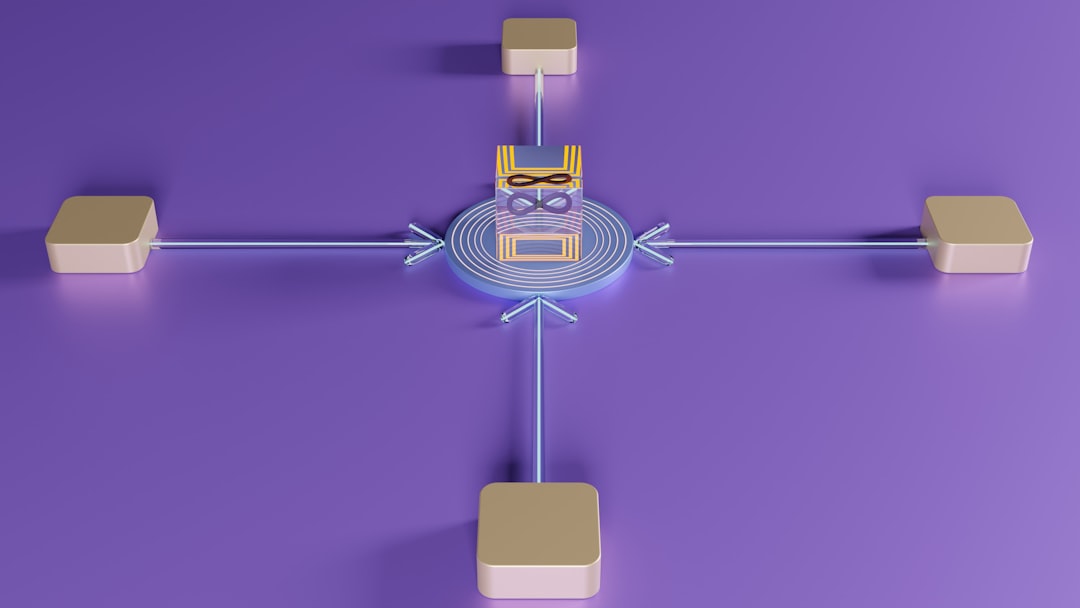

The benchmark centers on a visually grounded task in which two agents observe related but not identical scenes. Neither agent starts with predefined mathematical knowledge or symbolic conventions. To succeed, the agents must develop a shared communication protocol that encodes numerical relationships, and the task is built so that they must extrapolate beyond their training distribution. This design choice is methodologically crucial: it prevents agents from solving the task through simple lookup or memorization of training examples. Instead, they must discover that abstracting quantities into a symbolic system, in effect inventing a numerical representation, generalizes better than alternative communication strategies.
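To make the extrapolation argument concrete, here is a minimal sketch comparing two hand-written protocols on quantities outside the training range. It is an illustration, not the benchmark's actual setup: the three-symbol alphabet, the training and test ranges, and every function name below are assumptions made for this example, and real agents would have to learn such a protocol rather than be handed one.

```python
from typing import Callable, Dict, List, Optional

SYMBOLS = "abc"  # hypothetical shared alphabet; not taken from the benchmark


def build_lookup_protocol(train_quantities: List[int]) -> Dict[int, str]:
    """Holistic protocol: one arbitrary, memorized message per training quantity."""
    return {q: f"msg{idx}" for idx, q in enumerate(train_quantities)}


def positional_encode(quantity: int) -> str:
    """Compositional protocol: write the quantity in base len(SYMBOLS)."""
    base = len(SYMBOLS)
    if quantity == 0:
        return SYMBOLS[0]
    digits = []
    while quantity > 0:
        digits.append(SYMBOLS[quantity % base])
        quantity //= base
    return "".join(reversed(digits))


def positional_decode(message: str) -> int:
    """Invert positional_encode by accumulating digits left to right."""
    base = len(SYMBOLS)
    value = 0
    for ch in message:
        value = value * base + SYMBOLS.index(ch)
    return value


def accuracy(
    encode: Callable[[int], Optional[str]],
    decode: Callable[[str], Optional[int]],
    quantities: List[int],
) -> float:
    """Fraction of quantities the listener recovers from the speaker's message."""
    correct = 0
    for q in quantities:
        message = encode(q)
        if message is not None and decode(message) == q:
            correct += 1
    return correct / len(quantities)


train = list(range(0, 10))   # quantities seen during "training" (assumed range)
test = list(range(10, 20))   # held-out quantities that require extrapolation

table = build_lookup_protocol(train)
inverse_table = {message: q for q, message in table.items()}

lookup_train = accuracy(table.get, inverse_table.get, train)
lookup_test = accuracy(table.get, inverse_table.get, test)
positional_train = accuracy(positional_encode, positional_decode, train)
positional_test = accuracy(positional_encode, positional_decode, test)

print(f"lookup:     train={lookup_train:.2f}  test={lookup_test:.2f}")
print(f"positional: train={positional_train:.2f}  test={positional_test:.2f}")
```

The memorized table is perfect on the training quantities and has no message at all for unseen ones, while the positional code carries over unchanged. That gap is the property the benchmark's extrapolation constraint is meant to expose.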

The technical implementation likely involves multi-agent reinforcement learning with emergent communication, where agents optimize a shared reward signal while developing their communication channel. The key distinction from prior emergent communication work is the explicit focus on whether agents discover mathematical structure specifically—numerical systems with compositional properties (e.g., understanding that "five" can be decomposed into "three plus two"). This requires agents to move beyond arbitrary token sequences toward representations that capture the underlying mathematical relationships governing their environment.
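One way to check whether an emergent protocol carries this kind of structure is topographic similarity, a metric commonly used in emergent communication research: the rank correlation between distances in meaning space and distances in message space. The sketch below is an assumption-laden illustration, not the paper's evaluation. The article does not say which metric the benchmark uses, and the two toy protocols and all function names are invented for this example.

```python
import math
from itertools import combinations
from typing import Dict, List


def levenshtein(a: str, b: str) -> int:
    """Edit distance between two messages (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def average_ranks(values: List[float]) -> List[float]:
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared_rank = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            ranks[order[k]] = shared_rank
        pos = end + 1
    return ranks


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation; returns 0.0 when either input has no variance."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def topographic_similarity(protocol: Dict[int, str]) -> float:
    """Spearman correlation between |q1 - q2| and message edit distance."""
    meaning_dists, message_dists = [], []
    for q1, q2 in combinations(sorted(protocol), 2):
        meaning_dists.append(float(abs(q1 - q2)))
        message_dists.append(float(levenshtein(protocol[q1], protocol[q2])))
    return pearson(average_ranks(meaning_dists), average_ranks(message_dists))


# Tally protocol: "five" is literally "three" followed by "two".
tally = {q: "a" * q for q in range(1, 7)}
# Holistic protocol: arbitrary, hand-picked messages with no internal structure.
holistic = {1: "ca", 2: "ab", 3: "bb", 4: "ac", 5: "ba", 6: "cc"}

tally_score = topographic_similarity(tally)
holistic_score = topographic_similarity(holistic)
print(f"tally:    {tally_score:.3f}")
print(f"holistic: {holistic_score:.3f}")
```

For the tally code, the edit distance between two messages equals the difference between the quantities they name, so the correlation is perfect. The arbitrary code scores lower because its message distances say little about how far apart the quantities are.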

This benchmark addresses a critical gap in current evaluation methodology. Existing benchmarks used to assess mathematical ability (MATH, GSM8K, the mathematics subsets of MMLU, and others) present problems already embedded in formal mathematical language. A model's strong performance might reflect sophisticated parsing of mathematical notation and pattern-matching over similar problems in its training data, rather than genuine reasoning about abstract mathematical concepts. By forcing agents to invent mathematics rather than apply it, Math Takes Two creates conditions where surface-level pattern matching becomes insufficient. An agent cannot succeed by recognizing "this looks like a quadratic equation" if it has never encountered quadratic equations; it must discover that certain symbolic operations solve coordination problems more efficiently than alternatives.

The broader implications ripple across multiple research directions. For mechanistic interpretability researchers, this benchmark offers a controlled environment to study how mathematical reasoning emerges in model activations—what representational changes occur when an agent transitions from random communication to systematic numerical encoding? For alignment researchers, understanding how agents discover formal systems from scratch illuminates whether we can guide or constrain emergent capabilities. For cognitive scientists, the comparison between how artificial agents and humans develop mathematical reasoning under similar constraints provides empirical data for theories of mathematical cognition.

CuraFeed Take: Math Takes Two represents a meaningful methodological shift in how we evaluate mathematical reasoning, but its ultimate impact depends on what the results actually reveal. If current LLMs fail to develop genuine numerical systems in this benchmark despite their stellar performance on conventional math datasets, that's a watershed moment—it would suggest our benchmarks have been measuring something quite different from mathematical understanding. Conversely, if models spontaneously discover compositional numerical systems through agent communication, we'd have stronger evidence that large-scale language modeling genuinely captures mathematical abstraction rather than just statistical regularity. Watch for three things: (1) whether frontier models like GPT-4 or Claude actually develop structured numerical representations versus ad-hoc communication strategies, (2) whether the emergent representations show properties like compositionality and systematic generalization, and (3) how performance scales with model size and training data—if larger models don't automatically develop better mathematical reasoning in this setting, that's telling. The real winner here isn't necessarily the models that perform best, but researchers who use this benchmark to reverse-engineer what mathematical reasoning actually looks like in neural networks, potentially revealing whether we need fundamentally different architectures to capture genuine mathematical cognition.