The computational drug discovery pipeline is one of the most challenging domains for autonomous AI agents. The difficulty lies less in any individual task than in reliably orchestrating dozens of heterogeneous tools across sequences of 8 to 50+ steps. A single failure in quality control, parameter validation, or scientific reasoning can invalidate hours of computation. Current large language model-based agents, despite their reasoning capabilities, tend to falter in these high-complexity scenarios, often abandoning structured workflows in favor of ad hoc approaches that miss domain-specific constraints. The result is a genuine bottleneck: composing, validating, and refining molecular structures through systematic pipelines remains out of reach for contemporary agent architectures.

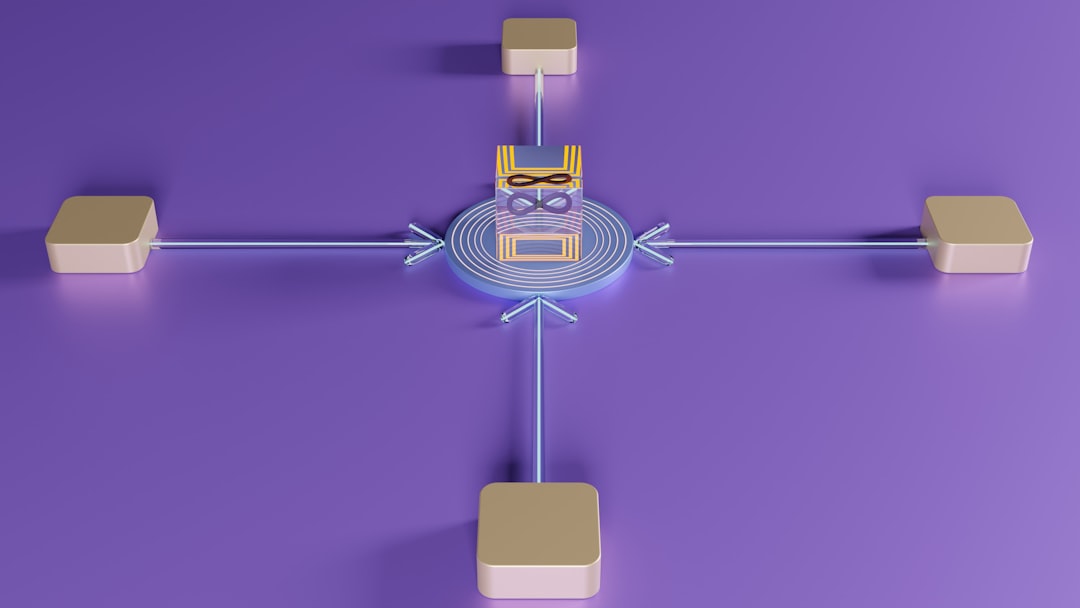

MolClaw addresses this gap with a deliberately engineered hierarchical skill framework rather than relying on emergent agent reasoning alone. The architecture stratifies capabilities across three tiers. At the foundation, tool-level skills standardize interactions with atomic operations (molecular property calculations, docking simulations, descriptor generation). At the intermediate layer, workflow-level skills compose these atomic operations into validated, end-to-end pipelines that incorporate quality checkpoints and reflection mechanisms. At the apex, discipline-level skills embed scientific principles that govern planning decisions and verification procedures across all molecular discovery scenarios. This stratification differs from a flat skill repository: it treats drug discovery as requiring not just access to tools but a principled understanding of how to sequence them.
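The three tiers can be pictured with a minimal Python sketch. This is an illustration under stated assumptions, not MolClaw's actual code: the class names `ToolSkill`, `WorkflowSkill`, and `DisciplineSkill` are hypothetical, and the two "tools" are placeholder string operations with no chemical meaning.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ToolSkill:
    """Tier 1 (hypothetical): one atomic operation behind a uniform signature."""
    name: str
    run: Callable[[dict], dict]


@dataclass
class WorkflowSkill:
    """Tier 2 (hypothetical): an ordered composition of tool skills."""
    name: str
    steps: List[ToolSkill]

    def execute(self, state: dict) -> dict:
        # Each step reads the shared state and contributes new keys to it.
        for step in self.steps:
            state = {**state, **step.run(state)}
        return state


@dataclass
class DisciplineSkill:
    """Tier 3 (hypothetical): a planning rule that selects a workflow."""
    name: str
    workflows: Dict[str, WorkflowSkill]

    def plan(self, task_kind: str) -> WorkflowSkill:
        try:
            return self.workflows[task_kind]
        except KeyError:
            raise ValueError(f"no validated workflow for {task_kind!r}") from None


# Placeholder tools: they only inspect the input string, not real chemistry.
count_chars = ToolSkill("count_chars", lambda s: {"n_chars": len(s["smiles"])})
flag_small = ToolSkill("flag_small", lambda s: {"is_small": s["n_chars"] < 10})

screening = WorkflowSkill("toy_screening", [count_chars, flag_small])
planner = DisciplineSkill("toy_planner", {"screening": screening})

result = planner.plan("screening").execute({"smiles": "CCO"})
print(result)
```

The point of the sketch is the direction of control: the top tier chooses a workflow, the workflow fixes the order of tool calls, and the tools never decide sequencing themselves.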

The implementation integrates over 30 specialized domain resources, spanning molecular dynamics simulators, cheminformatics libraries, docking platforms, and property predictors, into a unified interface. The 70 skills, distributed across the three tiers, are designed to be composable: workflow-level skills invoke the appropriate tool-level skills while maintaining state and enforcing quality gates. The workflow tier also incorporates reflection mechanisms that validate intermediate outputs against chemical feasibility constraints, property ranges, and domain-specific heuristics before proceeding. The discipline-level skills act as meta-controllers, reasoning about task decomposition and verification strategies in line with established medicinal chemistry principles. In effect, the architecture embeds domain expertise as structural constraints rather than expecting the agent to learn it through trial and error.
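A quality gate of the kind described above might look like the following sketch. Everything here is an assumption for illustration: `GatedStep`, `PipelineResult`, `in_range`, and `run_pipeline` are hypothetical names, the range `[-1.0, 5.0]` is a placeholder threshold rather than a value from the paper, and the sketch simply halts on a failed check, whereas a real reflection mechanism may retry or revise the step instead.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

Check = Callable[[dict], Tuple[bool, str]]


@dataclass
class GatedStep:
    """A pipeline step paired with a validation check on its output."""
    name: str
    run: Callable[[dict], dict]
    check: Check


@dataclass
class PipelineResult:
    state: dict
    log: List[str] = field(default_factory=list)
    halted_at: Optional[str] = None  # name of the step whose gate failed


def in_range(key: str, low: float, high: float) -> Check:
    """Build a check that the value stored under `key` lies in [low, high]."""
    def check(state: dict) -> Tuple[bool, str]:
        value = state[key]
        return low <= value <= high, f"{key}={value}, allowed [{low}, {high}]"
    return check


def run_pipeline(steps: List[GatedStep], state: dict) -> PipelineResult:
    """Run steps in order and stop at the first failed quality gate."""
    result = PipelineResult(state=dict(state))
    for step in steps:
        result.state.update(step.run(result.state))
        ok, reason = step.check(result.state)
        result.log.append(f"{step.name}: {'pass' if ok else 'FAIL'} ({reason})")
        if not ok:
            result.halted_at = step.name
            break
    return result


# Placeholder steps: no real property prediction or docking happens here.
steps = [
    GatedStep("estimate_property",
              lambda s: {"logp": s["raw_logp"]},
              in_range("logp", -1.0, 5.0)),
    GatedStep("dock",
              lambda s: {"docked": True},
              lambda s: (s["docked"], "docking step reported completion")),
]

good = run_pipeline(steps, {"raw_logp": 2.0})
bad = run_pipeline(steps, {"raw_logp": 9.0})
print(good.halted_at, bad.halted_at)
```

In the failing run the expensive downstream step never executes, which is the practical benefit of validating intermediate outputs before proceeding.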

To evaluate this approach, the researchers introduce MolBench, a benchmark designed to stress-test workflow orchestration. Instead of isolated molecular property prediction tasks, MolBench comprises three challenge categories: molecular screening (evaluating large compound libraries against multiple criteria), optimization (iteratively refining molecular structures toward target properties), and end-to-end discovery (complete workflows from initial compound generation through validation). Challenge complexity ranges from 8 sequential tool calls to 50+, a spectrum that reveals where agent performance degrades. The design is methodologically significant because it shifts evaluation away from cherry-picked demonstrations toward realistic, high-complexity scenarios where workflow coherence becomes the limiting factor.
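One way to see where performance degrades along that complexity spectrum is to group task outcomes by the number of tool calls and compare success rates per group. The sketch below assumes a hypothetical record format of `(n_steps, succeeded)` pairs and arbitrary bucket edges. Neither comes from MolBench, and the sample records are made-up placeholders, not benchmark results.

```python
from bisect import bisect_right
from collections import defaultdict
from typing import Dict, Iterable, Sequence, Tuple


def bucket_label(n_steps: int, edges: Sequence[int]) -> str:
    """Map a step count to a label such as '8-19' or '50+'."""
    i = bisect_right(edges, n_steps)
    if i == 0:
        return f"<{edges[0]}"
    if i == len(edges):
        return f"{edges[-1]}+"
    return f"{edges[i - 1]}-{edges[i] - 1}"


def success_by_bucket(
    records: Iterable[Tuple[int, bool]],
    edges: Sequence[int] = (8, 20, 50),  # assumed edges, chosen for illustration
) -> Dict[str, float]:
    """Return the fraction of successful tasks in each complexity bucket."""
    totals = defaultdict(lambda: [0, 0])  # label -> [successes, runs]
    for n_steps, succeeded in records:
        counts = totals[bucket_label(n_steps, edges)]
        counts[0] += int(succeeded)
        counts[1] += 1
    return {label: wins / runs for label, (wins, runs) in totals.items()}


# Made-up placeholder outcomes, used only to exercise the function.
sample = [(8, True), (12, False), (25, True), (60, False)]
rates = success_by_bucket(sample)
print(rates)
```

Reporting rates per bucket rather than a single aggregate makes it visible whether an agent fails uniformly or only once workflows grow long.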

The results support the core hypothesis: MolClaw achieves state-of-the-art performance across all MolBench metrics, and ablation studies show that the gains concentrate on tasks that demand structured workflows. Notably, the improvements vanish on simpler tasks solvable through ad hoc scripting, which suggests the hierarchical architecture provides an advantage only when sequential dependencies and quality constraints become binding. This matters methodologically: the researchers scope their claim to what the approach actually solves and avoid overclaiming general agent improvement.

CuraFeed Take: MolClaw represents a maturation in how the AI community approaches domain-specific automation. Rather than scaling foundation models and hoping emergent reasoning solves drug discovery, the work explicitly acknowledges that some domains require structural engineering. The three-tier skill hierarchy is a design pattern that will likely propagate to other sequential, constraint-rich domains such as materials science, synthetic biology, and process optimization. The critical insight from the ablation studies cuts deeper, however: the approach succeeds because it removes decision-making burden from the agent on well-understood problems (workflow composition) while preserving agent reasoning for genuinely novel aspects (parameter selection within validated pipelines). This suggests the next frontier isn't better agents but better task decomposition that clarifies where agents should decide and where domain constraints should decide. For practitioners, MolBench is positioned to become a standard yardstick against which future drug discovery agents are measured. For researchers, the question shifts: can this hierarchical skill pattern scale to domains where optimal workflows aren't pre-established? And critically, how much of MolClaw's advantage comes from the architecture versus the engineering effort invested in those 70 carefully designed skills?